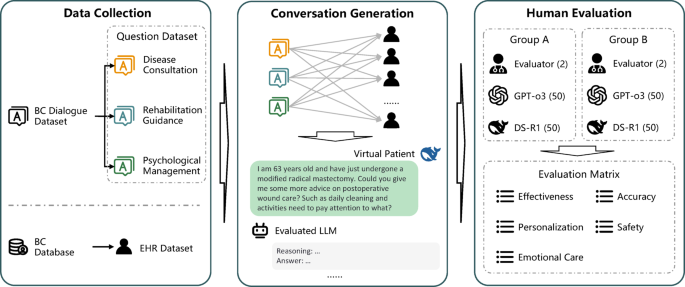

In this study, we systematically evaluated the performance of mainstream LLMs in out-of-hospital management scenarios for breast cancer patients. We simulated 100 VPs from real breast cancer cases and conducted multi-round dialogues with GPT-o3 and DS-R1 under out-of-hospital management scenarios. LLM performance was evaluated in five dimensions: effectiveness, safety, accuracy, personalization, and emotional care. Both LLMs performed satisfactorily in out-of-hospital management. According to human specialists, DS-R1 outperformed GPT-o3 in all dimensions except Rater 1’s emotional care, Rater 2’s safety, Rater 3’s safety, and Rater 4’s effectiveness. DS-R1 also generated more tokens in the same amount of time at lower economic cost, and had a shorter response time than GPT-o3. This study therefore suggests that LLMs could be deployed in out-of-hospital management scenarios for breast cancer patients, and that DS-R1 appears to perform better than GPT-o3.

The role of LLMs in the out-of-hospital management of cancer patients remains under debate. In our study, the majority of human physicians rated the LLMs’ responses at a score of 3, which indicates satisfactory performance in out-of-hospital management. However, problems remain, such as hallucinatory responses. During the evaluation, we occasionally encountered hallucinatory responses (2.0%, 4/200), which could severely mislead patients and cause hazardous events. For instance, in our study the LLMs sometimes advised a HER2-negative patient to receive targeted therapy, or advised a stage 0 VP to receive chemotherapy. Although such cases are rare, they could have irreversible consequences. This is in accordance with another study employing GPT-3.5 and GPT-4, which conducted an intrinsic evaluation of 60 GPT-powered VP-clinician conversations to assess the clinical performance of LLMs and to rate the quality of dialogues and feedback. The results showed that the quality of LLM-generated ratings of feedback was similar to that of human physicians, but with drawbacks such as lower authenticity, verbose vocabulary, and failure to mention important weaknesses or strengths [10]. Similar conclusions were reached by other studies focusing on Alzheimer’s disease management [11] and pain management [12]. To cope with inaccurate LLM responses, Ge et al. proposed “LiVersa”, a liver disease-specific LLM enhanced with retrieval-augmented generation (RAG). LiVersa demonstrated better performance than GPT-4 in answering hepatology-related questions [13]. We therefore believe that LLMs have a promising future in the out-of-hospital management of cancer patients; however, before they can be universally deployed, problems such as hallucinatory responses may need to be addressed. Disease-specific LLMs enhanced with RAG could be a future direction for improving the performance of LLMs in various medical scenarios.

Both GPT-o3 and DS-R1 demonstrated substantial potential in assisting out-of-hospital management, but DS-R1 had better overall performance and lower cost than GPT-o3 in our study. As a newly emerged model, DS-R1 has been little studied in breast cancer, whereas GPT has the most applications among existing LLMs across multiple scenarios of clinical practice. One retrospective, cross-sectional study reported that more than one-third of the recommendations made by GPT-3.5 for breast, prostate, and lung cancer were not consistent with the standard of care set by the National Comprehensive Cancer Network (NCCN) [14], though the updated GPT-4 shows significant improvement in the accuracy and detail of its recommendations [15]. Another cross-sectional study assessed the responses of 4 mainstream AI systems to the 5 most-searched queries on Google. GPT-3.5 demonstrated relatively high readability (DISCERN score) and understandability (PEMAT score), but relatively low actionability [6]. This is consistent with our finding that, according to human specialists, GPT has satisfactory accuracy, personalization, safety, effectiveness, and emotional care in out-of-hospital management, though it is inferior to DS-R1. Further, DS-R1 generates more tokens than GPT-o3 in a similar time, though it has a higher cost in total. Compared with human-delivered care, applying LLMs in remote care management could save tremendous resources [16]; however, few studies have compared existing LLMs in terms of cost-effectiveness [17]. We believe that, with the fast iteration of LLMs, the cost of chatbots derived from them will continue to decrease while their efficacy increases significantly.

Although LLMs have demonstrated promising applications in the out-of-hospital management of breast cancer, limitations still exist. According to the human specialists, the responses of the evaluated LLMs carry a moderate risk of misleading patients (Likert scale 2.92/2.77). This risk may stem from incorrect suggestions given for the VPs, which is consistent with previous literature indicating the limited applicability of LLMs [18,19]. Deng et al. reported that GPT-4 is superior to GPT-3.5 and Claude2 in terms of quality, relevance, and applicability in the analysis of breast cancer cases; however, its applicability remains limited according to human raters [18]. Similarly, another study reported that an LLM at times makes considerably fraudulent decisions, which could mislead breast cancer multidisciplinary tumor board (MTB) decisions [19]. Therefore, LLMs are not yet ready for full application in the out-of-hospital management of breast cancer, and more research is warranted to improve the accuracy of their responses.

Our study indicated that LLMs could provide personalized, empathetic, and accurate suggestions in the out-of-hospital management of breast cancer patients. LLMs could identify patients’ emotional needs and provide support for psychological problems. This is consistent with a previous study showing that, compared with human physicians, a GPT-based chatbot could generate empathetic, high-quality, and readable responses to patient questions on social media [20]. Another study reported that the chatbot “Vivibot” could deliver positive, personalized psychology skills to young adults who have undergone cancer treatment, which could significantly reduce anxiety [21]. Therefore, LLMs could strongly support both physicians and cancer patients during treatment and out-of-hospital management.

Our study has notable strengths, including a randomized, multi-phase design and combined LLM and human-physician evaluation and validation of the results. However, we also acknowledge several limitations. First, we evaluated only the two most up-to-date reasoning-enhanced LLMs; other LLMs, such as Grok3, were not included in the study. Second, only 10 VPs were simulated for the test; although over 100 question datasets were created, the sample size is still limited. Third, 4 human physicians participated in the evaluation of the LLM responses, and inter-rater heterogeneity could affect the results. To assess this, we used Cohen’s Kappa to quantify inter-rater agreement. In Dataset A, the Cohen’s Kappa results were 0.52 for DS-R1 scores (P < 0.01) and 0.68 for GPT-o3 scores (P < 0.01). In Dataset B, the results were 0.80 for DS-R1 scores (P < 0.01) and 0.54 for GPT-o3 scores (P < 0.01). Fourth, because of the limited follow-up time, no prognostic events were observed, which restricts our exploration of the association between LLM-deployed out-of-hospital management and the prognosis of the disease. Last, our study is a single-center study, and additional validation is required.
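
For readers unfamiliar with the agreement statistic cited above, the following is a minimal sketch of unweighted Cohen’s Kappa for one pair of raters, computed as (observed agreement − chance agreement) / (1 − chance agreement). The function name `cohens_kappa` and the example scores are hypothetical illustrations, not the study’s data or code. The study does not state whether a weighted variant was used or how the four raters were paired, so this shows only the basic two-rater form.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters scoring the same items.

    Hypothetical helper for illustration; not the study's analysis code.
    """
    if len(rater_a) != len(rater_b) or len(rater_a) == 0:
        raise ValueError("Both raters must score the same non-empty item set")

    n = len(rater_a)

    # Observed agreement: fraction of items given the same score by both raters.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n

    # Chance agreement: for each category, the product of the two raters'
    # marginal proportions, summed over all categories.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)

    if expected == 1.0:
        # Both raters used one identical category for every item.
        return 1.0

    return (observed - expected) / (1.0 - expected)


# Hypothetical Likert scores from two raters on ten responses.
scores_rater_1 = [3, 3, 2, 3, 1, 2, 3, 3, 2, 3]
scores_rater_2 = [3, 3, 2, 2, 1, 2, 3, 3, 3, 3]
kappa = cohens_kappa(scores_rater_1, scores_rater_2)
print(f"kappa = {kappa:.2f}")
```

In this hypothetical example the raters agree on 8 of 10 items, but part of that agreement is expected by chance because both use the score 3 most often; kappa discounts that share. With more than two raters, one common approach is to report the pairwise values or to use a multi-rater statistic instead.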
