Data Sources and Study Oversight

We used Medicaid eligibility files, which contain all people covered by Medicaid whether or not they have received healthcare, to predict non-receipt of care, specifically non-closure of HEDIS quality gaps29. We analyzed data from the Transformed Medicaid Statistical Information System Analytic Files (TAF) spanning 2017–201930. The TAF data include patient demographics, eligibility information, individual-level social determinants of health metrics (e.g., Temporary Assistance for Needy Families recipient status, household income; described in detail in Supplementary Note 1), geographic information (county of residence), and comprehensive claims data for outpatient, inpatient, long-term support, pharmacy, and other healthcare services, encompassing both fee-for-service and managed care. We included data from states meeting minimum quality standards defined by Medicaid.gov’s Data Quality Atlas during the study period31. State-level enrollment benchmarks, claims volume, and data completeness were assessed to ensure data quality (detailed quality criteria in Supplementary Note 2). The final analytic sample comprised 14,178,331 Medicaid beneficiaries residing across 1563 counties within 25 states and Washington, D.C. We obtained community-level social determinants of health data from the Agency for Healthcare Research and Quality (AHRQ) Social Determinants of Health Database32. This study adhered to the Transparent Reporting of a multivariable prediction model for Individual Prognosis or Diagnosis (TRIPOD) guidelines (Supplementary Table 1)33.

Ethics Approval and Consent to Participate

This study utilized de-identified administrative claims data from the Transformed Medicaid Statistical Information System Analytic Files (TAF) spanning 2017–2019. The research protocol was reviewed and approved by the Western Institutional Review Board (Princeton, New Jersey), which granted a waiver of informed consent due to the retrospective nature of the study and the use of de-identified data. All procedures were conducted in accordance with the ethical standards of the institutional and national research committees and with the 1964 Helsinki Declaration and its later amendments or comparable ethical standards.

Study Population and Follow-up

The study population included all Medicaid beneficiaries who met the standard national inclusion and exclusion criteria for at least one of the nine selected quality measures, not only the subset of patients with claims or electronic health record data. Most measures required 36 months of continuous Medicaid enrollment from 2017 through 2019. To assess potential selection bias from this requirement, we conducted a sensitivity analysis comparing the demographics of beneficiaries with 36 months of continuous enrollment to those with at least one month of enrollment in 2017 (results in Supplementary Table 12). We excluded beneficiaries dually enrolled in both Medicare and Medicaid because Medicare serves as the primary payer for these individuals, resulting in potentially incomplete medical claims in TAF. Additionally, dual-eligible beneficiaries typically receive separate care management services with different outreach protocols.

Outcomes

We assessed quality of care using the national Healthcare Effectiveness Data and Information Set (HEDIS) measures2. HEDIS comprises a standardized set of evidence-based performance measures encompassing a range of recommended services, from cancer screenings to medication adherence for chronic conditions. Our study focused on predicting non-completion of a HEDIS quality measure, specifically the probability that a patient did not receive a service recommended for them based on their age, biological sex, and medical history. Detailed definitions of inclusion and exclusion criteria for each measure, along with specific calculation methods following National Committee for Quality Assurance (NCQA) guidelines29, are provided in Supplementary Notes 3–4.

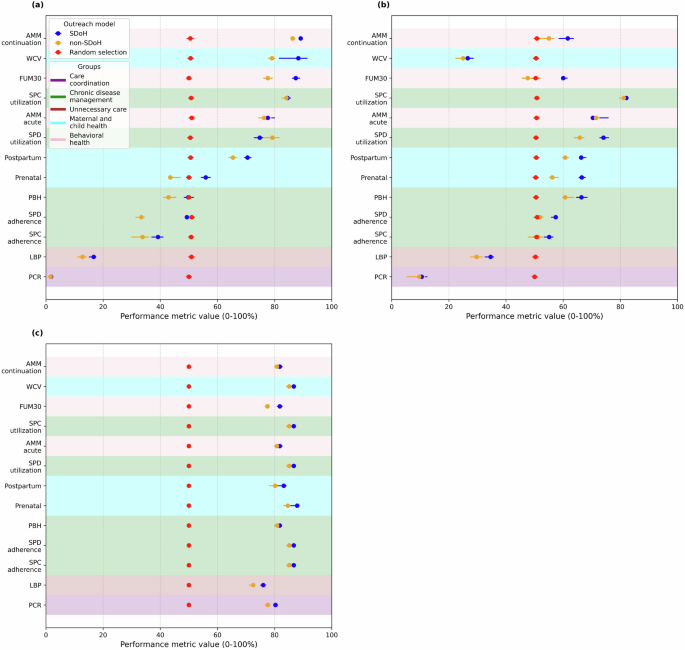

We developed separate prediction models for nine HEDIS measures chosen based on three criteria: inclusion across state Medicaid agency quality assessments34, relevance to diverse Medicaid patient populations (pediatrics, pregnant women, older adults), and coverage of multiple quality domains (prevention, treatment, and avoidance of low-value care). The measures were: (1) child and adolescent well-care visits (WCV); (2) prenatal and postpartum care visits (PPC); (3) follow-up after emergency department visits for mental illness (FUM30); (4) avoidance of unnecessary imaging for routine lower back pain (LBP); (5) all-cause hospital readmissions (PCR); (6) antidepressant medication management (AMM); (7) persistence of beta-blocker treatment after heart attack (PBH); (8) statin therapy for patients with cardiovascular disease (SPC); and (9) statin therapy for patients with diabetes (SPD). We focused on the subset of quality measures included most commonly in Medicaid state withhold (financial penalty) specifications within contracts to health plans. These do not include some preventive screening measures (such as lead screening and cervical and colorectal cancer screening) because those measures require laboratory, pathology, radiology, or procedural data that are available only for a biased subset of patients who have access to such services. Our goal was to ensure inclusion of patients who may have poor access to the healthcare system, thereby enhancing generalizability, and to align with state policymaker priorities for quality metrics at a population level.

To validate our HEDIS measure coding and ensure alignment with standard practice, we compared aggregate state-level results from our data with publicly available reports on HEDIS outcomes among Medicaid populations from the NCQA (detailed validation results in Supplementary Note 5). To reflect the heterogeneity of these metrics, we refer to them as ‘quality measures’ throughout this manuscript, with a subset related to primary prevention.

Predictor Variables

We constructed a comprehensive set of predictor variables from the TAF data, encompassing demographics, diagnoses, therapeutics, healthcare utilization, and social determinants of health factors. Demographic variables included age, sex, race/ethnicity (included to assess potential effects of structural racism on quality measure completion), and state of residence (using fixed effects to control for unmeasured state-level variation). We captured clinical information using standardized coding systems: Clinical Classifications Software Refined (CCSR) for diagnoses35, Restructured Berenson-Eggers Type of Service (BETOS) for types of care36, Centers for Medicare & Medicaid Services (CMS) specialty classifications for provider specialties37, and CMS Prescription Drug Data Collection codes for medications38.

We quantified healthcare utilization through counts of acute care visits (emergency department visits and hospitalizations), including visits for ambulatory care-sensitive conditions identified through the NYU Emergency Department algorithm and AHRQ Prevention Quality Indicators39,40. These methods allowed us to distinguish between emergent and non-emergent encounters, capturing both high-acuity episodes and outpatient-manageable conditions such as respiratory and gastrointestinal illnesses. To capture temporal patterns, we included the monthly rate of change in acute care visits and medication fills during 2017. We identified emergency department visits using Current Procedural Terminology, revenue, and place-of-service codes, while hospitalizations were defined as contiguous ED visits and inpatient admissions41.
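The exact definition of the monthly rate of change is not given in this section. As a minimal sketch, one plausible reading is the least-squares slope of a beneficiary's monthly visit counts across the 12 months of 2017. The function name `monthly_slope` and the sample counts are hypothetical and serve only as illustration.

```python
def monthly_slope(counts):
    """Least-squares slope of monthly counts against month index 0..n-1.

    Assumption: "monthly rate of change" is read as a linear trend;
    the source does not specify the formula.
    """
    n = len(counts)
    if n < 2:
        return 0.0
    mean_x = (n - 1) / 2
    mean_y = sum(counts) / n
    sxy = sum((i - mean_x) * (c - mean_y) for i, c in enumerate(counts))
    sxx = sum((i - mean_x) ** 2 for i in range(n))
    return sxy / sxx


# Hypothetical emergency department visit counts, January to December 2017.
ed_visits_2017 = [0, 0, 1, 0, 1, 1, 2, 1, 2, 2, 3, 3]
trend = monthly_slope(ed_visits_2017)  # positive value: rising utilization
```

A positive slope would indicate rising acute care use over the year, and a slope near zero would indicate stable use.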

We incorporated individual- and county-level social determinants of health measures based on established conceptual models linking social factors to healthcare utilization42. Individual-level measures included household size, income, English proficiency, marital status, citizenship status, and receipt of public assistance programs. County-level factors encompassed healthcare infrastructure (availability of substance use treatment facilities, mental health services, advanced practice providers, and urgent care), as well as area-level socioeconomic indicators and environmental factors (e.g., air quality, heat index; full definitions in Supplementary Note 6 and Table 2).

Because claims data capture only individuals with observed healthcare utilization, the model is limited to beneficiaries who have had at least some engagement with the healthcare system. However, the input data include individuals with minimal prior contact, and features such as missingness in clinical histories and enrollment gaps were treated as predictive signals. In line with National Academy of Medicine (NAM) recommendations, missing data were retained as a feature rather than removed or simply imputed, enabling the model to incorporate patterns of under-documentation and exclusion.

Some individual-level social need data—such as income, education, or food insecurity—were unavailable for all members and were supplemented where possible using county-level proxies. Variables with missingness exceeding 20% were either excluded or imputed using multivariate imputation, depending on predictive importance and coverage. A detailed list of variable sources, missingness, and imputation methods is provided in Supplementary Table 2. Following National Academy of Medicine guidelines6,16, missingness itself was often retained as a feature to capture patterns of under-documentation and structural exclusion that may hold predictive value43.
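To illustrate how missingness can be retained as a feature, the following sketch computes the share of missing values for a variable and adds a 0/1 indicator column for it. The record layout, field names, and helper names are hypothetical; the actual variable handling is documented in the supplementary materials.

```python
def missing_fraction(rows, field):
    """Share of records in which `field` is absent or None."""
    return sum(1 for r in rows if r.get(field) is None) / len(rows)


def add_missing_indicators(rows, fields):
    """Return copies of the records with a 0/1 `<field>_missing` flag per field."""
    flagged = []
    for row in rows:
        new_row = dict(row)
        for field in fields:
            new_row[field + "_missing"] = 1 if row.get(field) is None else 0
        flagged.append(new_row)
    return flagged


# Hypothetical records; None marks an undocumented value.
members = [
    {"id": 1, "household_income": 18000},
    {"id": 2, "household_income": None},
    {"id": 3, "household_income": 24000},
    {"id": 4, "household_income": None},
]
share_missing = missing_fraction(members, "household_income")
flagged_members = add_missing_indicators(members, ["household_income"])
```

In this toy example the share of missing values would exceed the 20% threshold described above, so the variable would be a candidate for exclusion or imputation, while the indicator column preserves the missingness pattern itself.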

Model Development and Comparison

To evaluate the added predictive value of incorporating social determinants of health for forecasting quality measure non-completion, we developed two sets of prediction models for each of the nine outcome measures: (1) a baseline clinical model incorporating patient demographics, diagnoses, therapeutics, and healthcare utilization; and (2) an expanded social determinants model incorporating all variables from the baseline clinical model plus individual-level social factors (e.g., household income, reliance on Supplemental Security Income, Social Security Disability Insurance, Temporary Assistance for Needy Families, and English proficiency) and area-level social factors derived from patient residential FIPS county code (e.g., poverty rate, population density, and per capita rates of substance use treatment, mental health services, and urgent care facilities).

We employed an Extreme Gradient Boosting (XGBoost) algorithm for both model sets44,45,46,47. We selected XGBoost for its capacity to model non-linear relationships and interactions between diverse clinical and social features. In prior work using the same T-MSIS Medicaid dataset48, XGBoost outperformed Random Forest, logistic regression, and regularized regression in predicting acute care utilization. Given its superior empirical performance in this context, we selected XGBoost while recognizing the trade-offs in interpretability.

To evaluate model performance and minimize overfitting, we implemented a standard 60/20/20 split for training, validation, and test sets, respectively. The validation set was used to tune hyperparameters during training, and the test set was preserved exclusively for final performance evaluation. Hyperparameters were optimized using a targeted tuning method described by Van Rijn and Hutter to enhance feature selection within the XGBoost framework (details in Supplementary Note 7)49. Although we did not implement nested cross-validation due to computational constraints within the CMS secure environment, we applied early stopping and regularization to mitigate overfitting. We acknowledge that relying on a single train/validation/test split may result in optimistic performance estimates. We benchmarked both models against a null model of random prediction using Monte Carlo simulation (n = 1000 iterations).
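The partitioning and null-model benchmark can be sketched as follows. This is an illustrative outline only: it assumes a simple random split of beneficiary identifiers and interprets "random prediction" as a fair coin flip per patient, neither of which is confirmed by the text. The function names are hypothetical.

```python
import random


def split_60_20_20(ids, seed=0):
    """Shuffle identifiers and split them 60/20/20 into train, validation, test."""
    rng = random.Random(seed)
    shuffled = list(ids)
    rng.shuffle(shuffled)
    n = len(shuffled)
    n_train = n * 6 // 10
    n_val = n * 2 // 10
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]
    return train, val, test


def null_model_accuracy(labels, n_iter=1000, seed=0):
    """Mean accuracy of coin-flip predictions over n_iter Monte Carlo draws."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_iter):
        hits = sum(1 for y in labels if rng.randint(0, 1) == y)
        total += hits / len(labels)
    return total / n_iter


train_ids, val_ids, test_ids = split_60_20_20(range(100))
# Hypothetical binary outcomes (1 = quality gap not closed).
baseline = null_model_accuracy([1, 0] * 100)
```

Keeping the test partition untouched until the final evaluation is what allows it to serve as an estimate of out-of-sample performance.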

Performance Measures

Following standard TRIPOD guidelines, we evaluated model performance using metrics relevant to identifying patients at high risk of non-closure of a quality gap. Primary performance metrics included AUROC, F1-score, sensitivity, specificity, positive predictive value (PPV), negative predictive value (NPV), and the Matthews Correlation Coefficient (MCC), which ranges from -1 to +1 (where -1 indicates total disagreement between prediction and observation and +1 represents perfect prediction)50. We estimated 95% confidence intervals for each metric using bootstrapping with 1000 replications. Accuracy was reported for completeness but was not used as the primary metric due to class imbalance.
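For reference, the threshold-based metrics listed above follow directly from the confusion matrix. The sketch below computes them for binary labels and adds a percentile bootstrap confidence interval; the percentile method is an assumption, since the text does not state which bootstrap interval was used, and the function names are hypothetical.

```python
import math
import random


def classification_metrics(y_true, y_pred):
    """Sensitivity, specificity, PPV, NPV, F1, and MCC for 0/1 labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)

    def ratio(num, den):
        return num / den if den else float("nan")

    mcc_den = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return {
        "sensitivity": ratio(tp, tp + fn),
        "specificity": ratio(tn, tn + fp),
        "ppv": ratio(tp, tp + fp),
        "npv": ratio(tn, tn + fn),
        "f1": ratio(2 * tp, 2 * tp + fp + fn),
        "mcc": ratio(tp * tn - fp * fn, mcc_den),
    }


def bootstrap_ci(y_true, y_pred, key, n_boot=1000, seed=0):
    """Percentile 95% interval for one metric (assumed bootstrap variant)."""
    rng = random.Random(seed)
    n = len(y_true)
    stats = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]
        value = classification_metrics(
            [y_true[i] for i in idx], [y_pred[i] for i in idx]
        )[key]
        if not math.isnan(value):  # skip resamples where the metric is undefined
            stats.append(value)
    stats.sort()
    lower = stats[int(0.025 * len(stats))]
    upper = stats[min(len(stats) - 1, int(0.975 * len(stats)))]
    return lower, upper


# Hypothetical labels: 1 = quality gap not closed.
observed = [1, 1, 1, 0, 0, 0, 0, 1]
predicted = [1, 1, 0, 0, 0, 1, 0, 1]
results = classification_metrics(observed, predicted)
```

Unlike accuracy, MCC uses all four cells of the confusion matrix, which is why it remains informative when one class is much more common than the other.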

We compared the predictive power of the social determinants model to random targeting for closing care gaps. Using model-specific sensitivity and specificity values, we estimated open gap rates, effective closure rates, and the number of outreach attempts required to close one gap, assuming a typical 20% success rate per outreach attempt in engaging patients to close their care gaps51,52. This analysis provides a population-level estimate of the social determinants model’s potential impact on improving quality measure completion rates compared to random targeting. To reflect the uncertainty in outreach success, we conducted a sensitivity analysis assuming lower success rates (5%, 10%, and 15%), reported in Supplementary Table 6.
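The exact formulas behind this comparison are not reproduced in this section. One way to reason about it, offered here as an assumption rather than the authors' calculation, is that each outreach attempt closes a gap only if the contacted patient actually has an open gap and the outreach succeeds. Under model-based targeting the first probability is the positive predictive value; under random targeting it is the open-gap prevalence. All numeric inputs below are hypothetical.

```python
def ppv_from_rates(sensitivity, specificity, prevalence):
    """Positive predictive value via Bayes' rule."""
    true_pos = sensitivity * prevalence
    false_pos = (1 - specificity) * (1 - prevalence)
    return true_pos / (true_pos + false_pos)


def attempts_per_closure(p_open_gap, success_rate=0.20):
    """Expected outreach attempts per closed gap (assumed simplified model)."""
    return 1 / (p_open_gap * success_rate)


# Hypothetical inputs, not values from the study.
prevalence = 0.5
ppv = ppv_from_rates(sensitivity=0.8, specificity=0.8, prevalence=prevalence)

model_attempts = attempts_per_closure(ppv)          # model-based targeting
random_attempts = attempts_per_closure(prevalence)  # random targeting

# Sensitivity analysis over the lower success rates named in the text.
by_success_rate = {
    rate: attempts_per_closure(ppv, rate) for rate in (0.05, 0.10, 0.15, 0.20)
}
```

Under this simplified model, the ratio of random to model-based attempts equals the ratio of PPV to prevalence, so the relative advantage of targeting does not depend on the assumed outreach success rate, although the absolute number of attempts does.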

Variable Importance

To understand the relative contribution of individual-level and area-level social determinants features in predicting quality measure non-completion, we assessed feature importance using the Gini index. Calculated within the XGBoost framework, the Gini index quantifies the average gain in purity (reduction in variance) achieved by splitting data based on a given feature across all decision trees in the ensemble. Features with higher Gini index values are considered more influential in the model’s predictions. For each of the nine outcome measures, we ranked all features (clinical and social determinants variables) by their Gini importance scores. To facilitate comparison across outcome measures and between feature types, we normalized the Gini importance scores to a 0–100 scale by dividing each score by the maximum Gini importance observed across all features for that specific outcome measure53. We then examined the top ten features for each outcome measure to identify the most salient clinical and social factors associated with quality measure non-completion.
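The normalization and ranking step can be sketched as follows. The feature names and raw scores are hypothetical placeholders, and the helpers operate on any dictionary of importance scores rather than on a specific library's output.

```python
def normalize_importance(scores):
    """Rescale importance scores so that the largest score equals 100."""
    top = max(scores.values())
    return {name: 100 * value / top for name, value in scores.items()}


def top_features(scores, k=10):
    """Return the k highest-scoring (feature, score) pairs, largest first."""
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)[:k]


# Hypothetical raw importance scores for one outcome measure.
raw_scores = {
    "prior_well_care_visits": 0.40,
    "household_income": 0.10,
    "county_poverty_rate": 0.05,
    "age": 0.20,
}
normalized = normalize_importance(raw_scores)
ranked = top_features(normalized, k=3)
```

Because each outcome measure is scaled by its own maximum, a normalized score of 50 means "half as important as the top feature for that measure" and is not directly comparable in absolute terms across measures.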

Assessing the Potential Impact of Social Determinants Improvement

To explore how model predictions vary under hypothetical improvements in social determinants of health, we conducted model-based simulations (Supplementary Note 8). These simulations do not estimate causal effects but provide illustrative counterfactual scenarios with changes in input features. We compared predicted probabilities of quality measure completion before and after hypothetically improving each social determinant variable, simulating a scenario with reduced social barriers. For the nine county-level variables, we first predicted quality measure completion using the held-out test set. We then created a modified version of this test set, where each member’s county-level social measures were set to their 75th percentile value within our sample of 1563 counties. Values already at or above the 75th percentile remained unchanged. We selected this percentile a priori to represent substantial, but achievable, improvements in county-level social conditions.
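The county-level transformation described above can be sketched as a floor at the 75th percentile. The sketch assumes the percentile is computed over the distribution of county values and that each variable is oriented so that higher values are more favorable; both points, along with the function names and sample data, are assumptions for illustration.

```python
import statistics


def percentile_75(county_values):
    """75th percentile of the county-level distribution."""
    return statistics.quantiles(county_values, n=4, method="inclusive")[2]


def raise_to_p75(member_values, county_values):
    """Set each member's county-level value to at least the 75th percentile.

    Values already at or above the threshold are left unchanged.
    """
    threshold = percentile_75(county_values)
    return [max(value, threshold) for value in member_values]


# Hypothetical per-county rates (e.g., facilities per 100,000 residents).
county_rates = [0, 1, 2, 3, 4]
# Hypothetical values attached to three members via their county of residence.
member_rates = [1, 3, 4]
counterfactual_rates = raise_to_p75(member_rates, county_rates)
```

The modified values would then replace the originals in a copy of the held-out test set before predictions are regenerated.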

For the five individual-level social variables (household income, reliance on Supplemental Security Income, Social Security Disability Insurance, Temporary Assistance for Needy Families, and English proficiency), we simulated improvement by shifting members from the lowest category to the next highest category. Using a dataset that incorporated all transformations (both county-level improvements to the 75th percentile and individual-level category shifts), we then re-generated model predictions to examine how estimated outcome probabilities shifted under hypothetical improvements.
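The individual-level shift can be illustrated with an ordered categorical variable. The category labels below are hypothetical; the actual coding of each variable is defined in the supplementary materials.

```python
def shift_lowest_up(values, ordered_levels):
    """Move members in the lowest category to the next category; keep the rest."""
    lowest, next_level = ordered_levels[0], ordered_levels[1]
    return [next_level if value == lowest else value for value in values]


# Hypothetical ordered income bands, lowest first.
income_levels = ["lowest", "low", "middle", "high"]
member_income = ["lowest", "middle", "lowest", "high"]
shifted_income = shift_lowest_up(member_income, income_levels)
```

Only members starting in the lowest category are changed, so the simulated improvement is concentrated among those facing the greatest measured social barriers.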

We conducted two sensitivity analyses: (1) univariate analyses, adjusting each social determinant variable individually to isolate its effect, and (2) a multivariate analysis concurrently adjusting all social variables to estimate their combined impact. For each outcome measure and analysis, we calculated the relative and absolute percentage point change in predicted quality gap closure attributable to the hypothetical social determinant improvements.
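The two change measures can be computed from predicted probabilities before and after the hypothetical improvement. The sketch below assumes that the population-level closure rate is summarized as the mean predicted probability, which is one reasonable reading of the text; the probabilities shown are hypothetical.

```python
def closure_change(p_before, p_after):
    """Absolute (percentage point) and relative (%) change in mean predicted closure."""
    mean_before = sum(p_before) / len(p_before)
    mean_after = sum(p_after) / len(p_after)
    absolute_pp = 100 * (mean_after - mean_before)
    relative_pct = 100 * (mean_after - mean_before) / mean_before
    return absolute_pp, relative_pct


# Hypothetical predicted probabilities of gap closure for two members.
before = [0.4, 0.6]
after = [0.5, 0.7]
abs_change, rel_change = closure_change(before, after)
```

Reporting both measures matters because the same absolute gain represents a much larger relative improvement for measures with low baseline completion.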

Evaluation of Potential Bias and Model Robustness

To assess potential biases and the robustness of our findings, we conducted several analyses (Supplementary Note 9). First, we evaluated racial/ethnic biases in the predictive models using the equalized odds method54. This approach examines whether the models exhibit differential predictive performance across racial/ethnic subgroups. Specifically, equalized odds assesses whether the probability of a given prediction (e.g., predicted receipt of preventive care) is the same across groups among those with the same true outcome (e.g., those who actually received the care). This method is particularly valuable for healthcare applications because it tests whether true positive rates and false positive rates are balanced across racial/ethnic groups, helping to detect systematic under-identification of quality measure needs in historically marginalized populations.
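As a minimal illustration of an equalized odds check, the sketch below computes the true positive rate and false positive rate within each group and reports the largest between-group gap for each. The group labels, data, and function names are hypothetical, and no particular threshold for an acceptable gap is implied.

```python
def group_rates(y_true, y_pred, groups):
    """True positive rate and false positive rate within each group."""
    rates = {}
    for g in sorted(set(groups)):
        rows = [(t, p) for t, p, grp in zip(y_true, y_pred, groups) if grp == g]
        tp = sum(1 for t, p in rows if t == 1 and p == 1)
        fn = sum(1 for t, p in rows if t == 1 and p == 0)
        fp = sum(1 for t, p in rows if t == 0 and p == 1)
        tn = sum(1 for t, p in rows if t == 0 and p == 0)
        rates[g] = {
            "tpr": tp / (tp + fn) if (tp + fn) else float("nan"),
            "fpr": fp / (fp + tn) if (fp + tn) else float("nan"),
        }
    return rates


def equalized_odds_gaps(rates):
    """Largest between-group difference in TPR and in FPR."""
    tprs = [r["tpr"] for r in rates.values()]
    fprs = [r["fpr"] for r in rates.values()]
    return max(tprs) - min(tprs), max(fprs) - min(fprs)


# Hypothetical outcomes, predictions, and group membership.
outcomes = [1, 1, 0, 0, 1, 1, 0, 0]
predictions = [1, 0, 0, 1, 1, 1, 0, 0]
membership = ["A", "A", "A", "A", "B", "B", "B", "B"]

per_group = group_rates(outcomes, predictions, membership)
tpr_gap, fpr_gap = equalized_odds_gaps(per_group)
```

Gaps near zero on both rates would be consistent with equalized odds, whereas a large true positive rate gap would indicate that the model identifies the outcome less reliably in one group.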

We also conducted a sensitivity analysis using six-month intervals for defining quality measure completion (Supplementary Note 10). This addressed the potential for unobserved time-varying confounding due to care management programs, which may intensify outreach later in the year based on eligibility file updates. To examine potential selection bias introduced by the continuous enrollment criteria (36 months), we compared the baseline demographics of the included sample to those of the broader Medicaid population in our dataset enrolled for at least one month in 2017. This comparison evaluated the generalizability of our findings to a less stringently defined population and assessed the likelihood of biased predictions for those outside our 36-month sample.

To evaluate the robustness of model performance across subpopulations with more versus less data availability, we conducted a sensitivity analysis stratifying patients into low, medium, and high utilization tiers based on the volume of claims observed in the baseline period (Supplementary Note 10). For each model, we computed standard performance metrics (e.g., AUROC, F1-score, sensitivity, specificity) separately within each utilization stratum. This allowed us to assess whether performance was disproportionately driven by high-utilization patients and to identify potential limitations in generalizability to patients with sparse data.
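A stratified evaluation of this kind can be sketched as follows. The tier cut points are not stated here, so the sketch assumes tertiles of baseline claim counts; sensitivity is shown as one example metric, and all data and names are hypothetical.

```python
import statistics


def assign_tiers(claim_counts):
    """Label each patient low, medium, or high by tertile of claim volume (assumed)."""
    low_cut, high_cut = statistics.quantiles(claim_counts, n=3, method="inclusive")
    tiers = []
    for count in claim_counts:
        if count <= low_cut:
            tiers.append("low")
        elif count <= high_cut:
            tiers.append("medium")
        else:
            tiers.append("high")
    return tiers


def sensitivity_by_tier(y_true, y_pred, tiers):
    """Sensitivity computed separately within each utilization tier."""
    result = {}
    for tier in ("low", "medium", "high"):
        rows = [(t, p) for t, p, s in zip(y_true, y_pred, tiers) if s == tier]
        tp = sum(1 for t, p in rows if t == 1 and p == 1)
        fn = sum(1 for t, p in rows if t == 1 and p == 0)
        result[tier] = tp / (tp + fn) if (tp + fn) else float("nan")
    return result


# Hypothetical baseline claim counts, outcomes, and predictions for seven patients.
claims = [0, 1, 2, 3, 4, 5, 6]
y_obs = [1, 1, 0, 1, 1, 1, 1]
y_hat = [0, 1, 0, 1, 0, 1, 1]

strata = assign_tiers(claims)
tier_sensitivity = sensitivity_by_tier(y_obs, y_hat, strata)
```

A marked drop in performance in the low tier would suggest that predictions rely heavily on claims history and may generalize less well to patients with sparse data.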
